Neural network

Artificial neural networks (ANNs) are an adaptive, 'parallel' computing strategy. They first appeared on the artificial intelligence (AI) scene in the late 1950s.

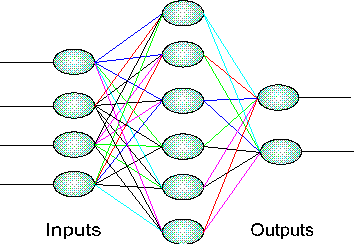

Modeled after the neuron-based wiring in biological brains, ANNs are composed of artificial neurons joined by connections, or 'links', each carrying a weight, or 'synaptic strength'. Together the weights determine whether the succeeding artificial neurons fire. An ANN can have many inputs and, in general, many outputs, but the simplest networks have just one output, which makes them well suited to answering a 'yes or no' question.

Each connection in the ANN has its own numerical 'weight'. A node multiplies each value received from its parent nodes (presynaptic neurons) by the weight of the connection it arrived on, sums the results, and compares the total with a threshold to decide whether to fire. Its output is then passed on to subsequent nodes, which do the same, and so on.
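The flow of values from node to node can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it uses the common formulation in which weights sit on the connections and each node fires when the weighted sum of its inputs exceeds a threshold. The names `neuron_output` and `layer_output`, the bias term, and all weight values are made up for the example.

```python
def neuron_output(inputs, weights, bias=0.0):
    """Weighted sum of the inputs, followed by a hard threshold at zero."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if total > 0 else 0  # 1 means the neuron fires


def layer_output(inputs, weight_rows, biases):
    """Evaluate several neurons that all read the same inputs."""
    return [neuron_output(inputs, row, b) for row, b in zip(weight_rows, biases)]


# Two inputs feed two hidden neurons, which feed a single yes/no output neuron.
# All weights below are arbitrary illustrative values.
hidden = layer_output([1, 0], [[0.5, -0.4], [-0.3, 0.8]], [0.0, 0.0])
answer = neuron_output(hidden, [1.0, 1.0], bias=-0.5)

print(hidden)  # [1, 0]
print(answer)  # 1
```

With the input `[1, 0]`, the first hidden neuron receives a total of 0.5 and fires, the second receives -0.3 and stays silent, and the output neuron receives 1.0 - 0.5 = 0.5 and answers 'yes'.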

ANNs are helpful for solving problems that have complex causes and simple effects (yes/no). In other words, the system's designer may know only some of the types of input the ANN will receive and what the output should be, yet be unable to design an explicit algorithm because the problem is too convoluted.

An ANN often starts out as a network of nodes initialized with random weight values. It is then trained by a user, or by another ANN, that supplies desired input/output combinations, and the ANN adjusts its weights accordingly. Some ANNs are 'self-adjusting' and use feedback loops to adjust their weight values.
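As a rough illustration of this train-by-example loop, the sketch below trains a single artificial neuron with the classic perceptron learning rule. That rule is one simple choice among many and is an assumption of this example, as are the function names, the learning rate, the random seed, and the choice of logical AND as the task.

```python
import random


def predict(weights, bias, inputs):
    """Fire (return 1) when the weighted sum of the inputs is positive."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train(examples, rate=0.1, max_epochs=1000, seed=0):
    """Start from random weights and nudge them after every wrong answer."""
    rng = random.Random(seed)
    n_inputs = len(examples[0][0])
    weights = [rng.uniform(-0.5, 0.5) for _ in range(n_inputs)]
    bias = rng.uniform(-0.5, 0.5)
    for _ in range(max_epochs):
        mistakes = 0
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)  # -1, 0 or +1
            if error != 0:
                mistakes += 1
                weights = [w + rate * error * x for w, x in zip(weights, inputs)]
                bias += rate * error
        if mistakes == 0:  # every example is answered correctly
            break
    return weights, bias


# Desired input/output combinations for logical AND.
AND_EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train(AND_EXAMPLES)

for inputs, target in AND_EXAMPLES:
    print(inputs, "->", predict(weights, bias, inputs), "expected", target)
```

A single neuron trained this way can only learn linearly separable yes/no questions such as AND. Networks with several layers need a different training method, which this sketch does not cover.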

A neural network is a good paradigm for a specific type of problem (many causes, few effects), but for more robust and practical AI problems, ANNs fall short.

Pictured is a generic ANN representation.